Authors:

(1) Simone Silvestri, Massachusetts Institute of Technology, Cambridge, MA, USA;

(2) Gregory Wagner, Massachusetts Institute of Technology, Cambridge, MA, USA;

(3) Christopher Hill, Massachusetts Institute of Technology, Cambridge, MA, USA;

(4) Matin Raayai Ardakani, Northeastern University, Boston, MA, USA;

(5) Johannes Blaschke, Lawrence Berkeley National Laboratory, Berkeley, CA, USA;

(6) Valentin Churavy, Massachusetts Institute of Technology, Cambridge, MA, USA;

(7) Jean-Michel Campin, Massachusetts Institute of Technology, Cambridge, MA, USA;

(8) Navid Constantinou, Australian National University, Canberra, ACT, Australia;

(9) Alan Edelman, Massachusetts Institute of Technology, Cambridge, MA, USA;

(10) John Marshall, Massachusetts Institute of Technology, Cambridge, MA, USA;

(11) Ali Ramadhan, Massachusetts Institute of Technology, Cambridge, MA, USA;

(12) Andre Souza, Massachusetts Institute of Technology, Cambridge, MA, USA;

(13) Raffaele Ferrari, Massachusetts Institute of Technology, Cambridge, MA, USA.

Table of Links

5.1 Starting from scratch with Julia

5.2 New numerical methods for finite volume fluid dynamics on the sphere

5.3 Optimization of ocean free surface dynamics for unprecedented GPU scalability

6 How performance was measured

7 Performance Results and 7.1 Scaling Results

9 Acknowledgments and References

4 Current State of the Art

We are aware of only three global ocean simulations that have achieved resolutions finer than 5 kilometers, all at tremendous computational expense. In 2014, MITgcm [29] was used to perform the one-year, tidally forced ice-ocean simulation “LLC4320” [45], which has 2.2 km horizontal resolution with 90 vertical levels. LLC4320 achieved 0.047 simulated years per day (SYPD) using 70,000 cores of the NASA Pleiades system.

FIO-COM32 [50] ran at ∼2.5 km (1/32nd degree) horizontal resolution with 90 vertical levels for 3.5 years. [48] ported LICOM3 to GPUs to realize 0.51 SYPD at 1/20th degree horizontal resolution with 60 vertical levels using 384 AMD MI50 GPUs, and further scaled to 26,200 MI50s with a strong scaling efficiency of 8%.

The largest ocean simulations used in current IPCC-class climate models, which typically require faster time-to-solution to support longer simulations, have horizontal resolutions of roughly 10 km. [8] describes output from four 60-year ocean simulations following the OMIP-2 protocol with 8 km (1/12th degree), 10 km, and two with 11 km (1/10th degree). [11] report a 110-year simulation at 10 km (1/10th degree) horizontal resolution, the longest high-resolution OMIP-2-style run. Some of the highest resolution climate models are the iHESP CESM-based model with 25 km-10 km atmosphere-ocean resolution [51], achieving 3.4 SYPD, and the 50 km-10 km HadGEM3-GC3.1 submission to HighResMIP [18, 37], achieving 0.4 SYPD.

At 3.4 SYPD, the iHESP CESM achieves sufficient time-to-solution for hundreds to thousands of years of simulated climate. But such a simulation comes at a high price, requiring 40% of the Sunway TaihuLight supercomputer [51] and 4 million cores consuming 6 MW for hundreds of days of wall clock time. Enabling the large ensembles of high-resolution simulations needed to improve climate prediction requires both performance at scale and efficient resource utilization.
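The cost described above can be checked with simple arithmetic. The following is a minimal sketch, not taken from the paper: the function names are hypothetical, and it assumes a constant power draw at the quoted 6 MW for the whole run.

```python
def wall_clock_days(simulated_years: float, sypd: float) -> float:
    """Wall-clock days needed to simulate `simulated_years` at a throughput of `sypd`."""
    if sypd <= 0:
        raise ValueError("sypd must be positive")
    return simulated_years / sypd


def energy_mwh(days: float, power_mw: float) -> float:
    """Energy in MWh for a run of `days`, assuming a constant draw of `power_mw` MW."""
    return days * 24.0 * power_mw


# Illustrative inputs: 3.4 SYPD and 6 MW are the values quoted in the text.
for years in (100, 1000):
    days = wall_clock_days(years, sypd=3.4)
    print(f"{years} simulated years: {days:.0f} days, {energy_mwh(days, 6.0):.0f} MWh")
```

Under these assumptions, a 1000-year simulation takes roughly 294 days of wall clock time, consistent with the "hundreds of days" stated above.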

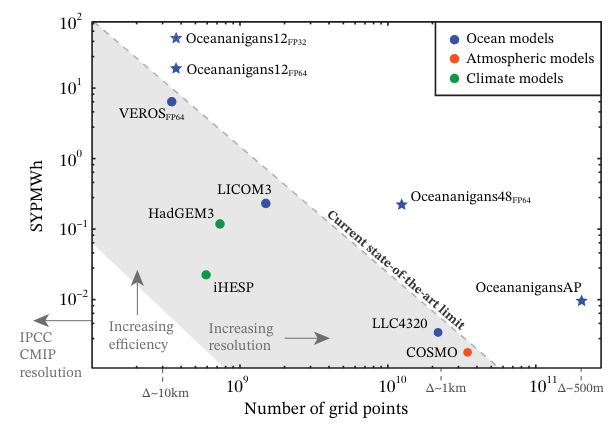

Figure 1 plots simulated years per megawatt-hour (SYPMWh) against resolution for state-of-the-art ocean models. The SYPMWh metric encodes the efficiency requirement needed to make progress on climate uncertainty with next-generation climate models: in particular, we require both higher-resolution models (moving rightwards in figure 1) and more efficient models (moving upwards in figure 1). For completeness, we also report SYPMWh for two GPU-based models: Veros [22], an ocean model, and COSMO [15], an atmospheric model. The present nomination is shown with stars, from which we see significant performance gains compared to the existing state of the art.
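To make the SYPMWh metric concrete, here is a minimal sketch of how it can be derived from SYPD and average power draw. This is an assumed definition (simulated years in one wall-clock day divided by the megawatt-hours consumed in that day); the exact power accounting behind figure 1 may differ, and the function name is hypothetical.

```python
HOURS_PER_DAY = 24.0


def simulated_years_per_mwh(sypd: float, power_mw: float) -> float:
    """SYPD divided by the MWh consumed in one wall-clock day at `power_mw` MW."""
    if power_mw <= 0:
        raise ValueError("power_mw must be positive")
    return sypd / (power_mw * HOURS_PER_DAY)


# Illustrative inputs: 3.4 SYPD at 6 MW, the values quoted in the text
# for the coupled iHESP CESM run (not an ocean-only model).
print(f"{simulated_years_per_mwh(3.4, 6.0):.4f} SYPMWh")
```

With these inputs the sketch gives about 0.024 SYPMWh. Because the metric divides throughput by power, a model can move upwards in the figure either by running faster on the same hardware or by reaching the same SYPD on fewer, more efficient devices.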

5 Innovations

Our achievement is three-fold: first, using new software written in the Julia programming language called Oceananigans.jl [35], we report a near-global ocean simulation with the highest-ever horizontal resolution (488 m), reaching 15 simulated days per day (0.04 SYPD). Second, Oceananigans performs this simulation with breakthrough memory efficiency on just 768 NVIDIA A100 GPUs, and thus a fraction of the available resources on current and upcoming exascale supercomputers. Third, and arguably most important, Oceananigans achieves breakthrough energy efficiency, simulating the global ocean at 0.95 SYPD with 1.7 km resolution on 576 A100s, and at 9.9 SYPD with 10 km resolution (the highest horizontal resolution employed by an IPCC-class ocean model) on 68 NVIDIA A100s. This final milestone proves the feasibility of routine climate simulations with 10 km ocean components, a crucial resolution threshold at which ocean macroturbulence (the most energetic ocean motions with scales between 10–100 km) is fully resolved.

We attribute these achievements first and foremost to a high-risk, high-reward strategy to develop a new ocean model from scratch in Julia with a specific focus on GPU performance and memory efficiency. Additional crucial ingredients include advances in numerical methods for finite volume fluid dynamics on the sphere and a novel optimization for simulating ocean free surface dynamics that achieves unprecedented GPU scalability.

This paper is available on arXiv under the CC BY 4.0 DEED license.